Knowledge-based techniques exploit the sense inventories of knowledge resources (e.g.Unsupervised models directly learn word senses from text corpora.The main distinction of these approaches is in how they model meaning and where they obtain it from: In order to deal with the meaning conflation deficiency, a number of approaches have attempted to model individual word senses. Therefore, accurately capturing the semantics of ambiguous words plays a crucial role in the language understanding of NLP systems.Īn illustration of the meaning conflation deficiency in a 2D semantic space around the ambiguous word mouse (Dimensionality was reduced using PCA visualized with Tensorflow embedding projector).

This, in turn, contributes to the violation of the triangle inequality in euclidean spaces. In our previous example, the two semantically-unrelated words rat and screen are pulled towards each other in the semantic space for their similarities to two different senses of mouse. Moreover, this meaning conflation has additional negative impacts on accurate semantic modeling, e.g., semantically unrelated words that are similar to different senses of a word are pulled towards each other in the semantic space. According to the Principle of Economical Versatility of Words, frequent words tend to have more senses, which can cause practical problems in downstream tasks. For instance, the noun mouse can refer to two different meanings depending on the context: an animal or a computer device. However, despite their flexibility and success in capturing semantic properties of words, the effectiveness of word embeddings is generally hampered by an important limitation, known as the meaning conflation deficiency: the inability to discriminate among different meanings of a word.Ī word can have one meaning (monosemous) or multiple meanings (ambiguous). Word2Vec, GloVe or FastText ) have proved to be powerful keepers of prior knowledge to be integrated into downstream Natural Language Processing (NLP) applications.

As explained in a previous post, word embeddings (e.g. Word embeddings are representations of words as low-dimensional vectors, learned by exploiting vast amounts of text corpora. Written by Jose Camacho Collados and Taher Pilehvar The text, written in any of the 271 languages supported by BabelNet 3.0, is output with possibly overlapping semantic annotations.įor example, suppose we want to disambiguate the sentence: "Nintendo announces new details on Mario Kart 8.".How to Represent Meaning in Natural Language Processing? Word, Sense and Contextualized Embeddings It then extracts a dense subgraph of this representation and selects the best candidate meaning for each fragment. It creates a graph-based semantic interpretation of the whole text by linking the candidate meanings of the extracted fragments using the previously-computed semantic signatures. Given an input text, it extracts all the linkable fragments from this text and, for each of them, lists the possible meanings according to the semantic network.This is a preliminary step which needs to be performed only once, independently of the input text. It associates with each vertex of the BabelNet semantic network, i.e., either concept or named entity, a semantic signature, that is, a set of related vertices.

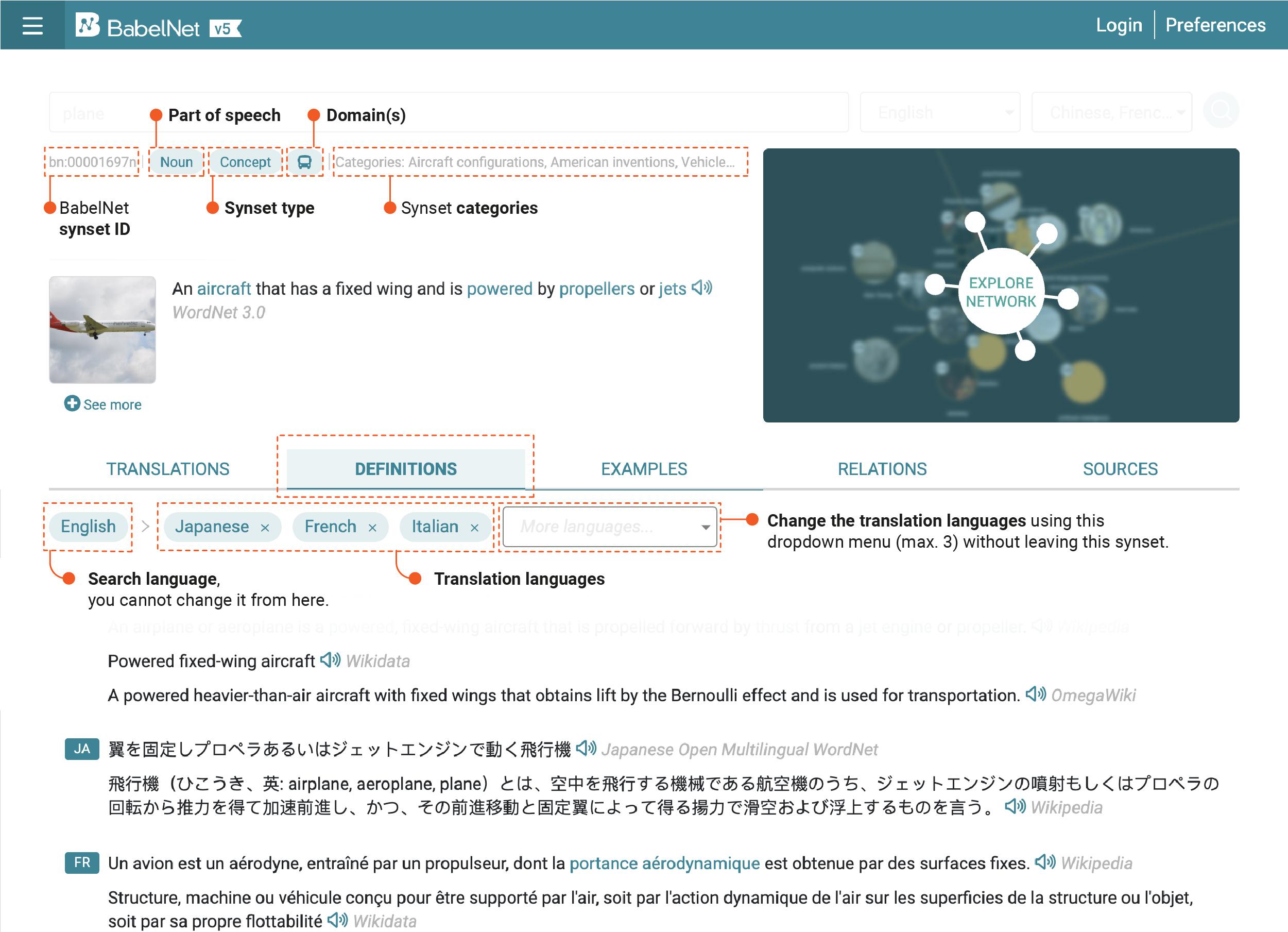

Which selects high-coherence semantic interpretations.īabelfy is based on the BabelNet 3.0 multilingual semantic network and jointly performs disambiguation and entity linking in three steps: About Babelfy is a unified, multilingual, graph-based approach to Entity Linking and Word Sense Disambiguationīased on a loose identification of candidate meanings coupled with a densest subgraph heuristic
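The three Babelfy steps above can be illustrated with a toy sketch on the example sentence. This is not Babelfy's implementation and does not use the BabelNet API. The semantic signatures, the candidate meanings and the meaning labels (such as `Mario_(character)`) are hypothetical hand-written data. The densest-subgraph step is approximated by a simple greedy loop, which is an assumption made for illustration. While some fragment still has several candidate meanings, the loop drops that fragment's least-connected meaning. It then keeps the densest graph seen along the way, where density is measured as the number of edges per vertex.

```python
from itertools import combinations

# Hypothetical toy data: labels and signatures are invented for illustration
# and are NOT BabelNet identifiers.
# Step 1 (done once): a "semantic signature" per meaning = set of related meanings.
SIGNATURES = {
    "Nintendo_(company)": {"Mario_Kart_8_(video_game)", "Mario_(character)"},
    "announce_(make_known)": {"detail_(information)"},
    "detail_(information)": {"announce_(make_known)"},
    "detail_(military_unit)": {"army_(organization)"},
    "Mario_Kart_8_(video_game)": {"Nintendo_(company)", "Mario_(character)"},
    "Mario_(character)": {"Nintendo_(company)", "Mario_Kart_8_(video_game)"},
    "Mario_(given_name)": {"person_(concept)"},
}

# Step 2: candidate meanings for each linkable fragment of
# "Nintendo announces new details on Mario Kart 8." ("Mario" overlaps "Mario Kart 8").
CANDIDATES = {
    "Nintendo": ["Nintendo_(company)"],
    "announces": ["announce_(make_known)"],
    "details": ["detail_(information)", "detail_(military_unit)"],
    "Mario Kart 8": ["Mario_Kart_8_(video_game)"],
    "Mario": ["Mario_(given_name)", "Mario_(character)"],
}


def build_graph(candidates, signatures):
    """Step 3a: link candidate meanings of different fragments when either
    meaning appears in the other's signature. Vertices are (fragment, meaning)."""
    vertices = [(frag, m) for frag, meanings in candidates.items() for m in meanings]
    adj = {v: set() for v in vertices}
    for u, v in combinations(vertices, 2):
        if u[0] == v[0]:
            continue  # two meanings of the same fragment are never linked
        if v[1] in signatures.get(u[1], set()) or u[1] in signatures.get(v[1], set()):
            adj[u].add(v)
            adj[v].add(u)
    return adj


def density(adj):
    """Edges per vertex, the density measure assumed in this sketch."""
    if not adj:
        return 0.0
    edges = sum(len(nbrs) for nbrs in adj.values()) / 2
    return edges / len(adj)


def copy_graph(adj):
    return {v: set(nbrs) for v, nbrs in adj.items()}


def densest_subgraph(adj):
    """Step 3b (greedy approximation): while some fragment is still ambiguous,
    remove its least-connected meaning and remember the densest graph seen."""
    adj = copy_graph(adj)
    best = copy_graph(adj)
    while True:
        per_fragment = {}
        for frag, _ in adj:
            per_fragment[frag] = per_fragment.get(frag, 0) + 1
        ambiguous = [v for v in adj if per_fragment[v[0]] > 1]
        if not ambiguous:
            break
        # Lowest degree first; the vertex tuple breaks ties deterministically.
        weakest = min(ambiguous, key=lambda v: (len(adj[v]), v))
        for nbr in adj.pop(weakest):
            adj[nbr].discard(weakest)
        if density(adj) >= density(best):
            best = copy_graph(adj)
    return best


def disambiguate(candidates, signatures):
    """Step 3c: for each fragment, pick its best-connected surviving meaning."""
    graph = densest_subgraph(build_graph(candidates, signatures))
    choice = {}
    for (frag, meaning), nbrs in graph.items():
        if frag not in choice or len(nbrs) > choice[frag][1]:
            choice[frag] = (meaning, len(nbrs))
    return {frag: meaning for frag, (meaning, _) in choice.items()}


if __name__ == "__main__":
    for fragment, meaning in disambiguate(CANDIDATES, SIGNATURES).items():
        print(f"{fragment!r} -> {meaning}")
```

On this toy data the sketch keeps the character sense of "Mario" and the information sense of "details". Those are the only candidates of their fragments that connect to the rest of the interpretation graph. The edges-per-vertex density and the lowest-degree removal rule are simplifications chosen for brevity. They are not claimed to match Babelfy's own candidate scoring.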